- What Is a Crawl Anomaly and What Does It Mean in Google Search Console?

- What a Failed Crawl Anomaly Error Means

- How to Fix a Crawl Anomaly Error

- Reasons for Crawl Anomaly Errors in Search Console

- Errors That Cause a Crawl Anomaly

- Error Levels That Fall Under Crawl Anomaly

- Are URLs De-indexed After a Crawl Anomaly Error?

What Is a Crawl Anomaly and What Does It Mean in Google Search Console?

In Google Search Console, a crawl anomaly is an unspecified error that occurred while Googlebot was fetching a URL on your website. It usually means the server answered with a 4xx- or 5xx-level response code when Googlebot expected a 200 OK response.

What a Failed Crawl Anomaly Error Means

A "fetch failed: crawl anomaly" error means that Googlebot requested the page, received a 4xx- or 5xx-level response code, and left without crawling the URL. Search Console then flags the URL as a crawl anomaly. Because Googlebot could not fetch and download the document, the page will not be part of the Google index until the error is resolved.

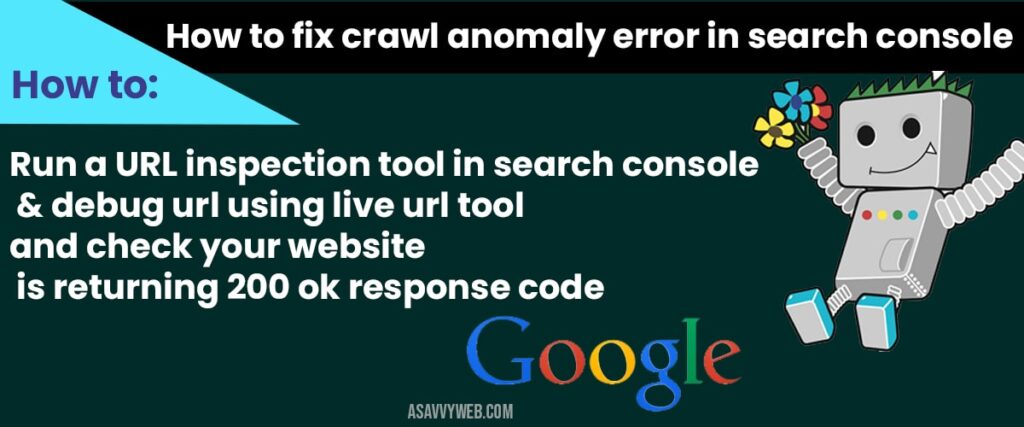

How to Fix a Crawl Anomaly Error

If Search Console reports a fetch failed crawl anomaly for your website, check your server logs first to see why the error occurred and which response code was returned, i.e. a 4xx-level or a 5xx-level error. The best way to debug a crawl anomaly is the URL Inspection tool. It shows how Googlebot crawled the page, including the HTTP response code, mobile friendliness, rendering issues, a snapshot of how Googlebot sees the page, the canonical URL you declared, the canonical URL Google selected, and any AMP issues.
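The server-log check can be sketched in a few lines of Python. This is a minimal illustration, not a complete log analyzer: it assumes the access log uses the common "combined" format written by Apache and nginx, and the function name `googlebot_errors` is made up for this example. It matches on the user-agent string only, which can be spoofed, so treat the result as a starting point.

```python
import re
from collections import Counter

# Assumption: the log is in "combined" format, e.g.
# IP - - [time] "METHOD /path HTTP/1.1" STATUS BYTES "referrer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_errors(lines):
    """Count 4xx/5xx responses served to requests whose user agent names Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match is None:
            continue  # skip lines that are not in the assumed format
        if "Googlebot" not in match["agent"]:
            continue  # only requests that claim to be Googlebot
        status = int(match["status"])
        if status >= 400:
            hits[(match["path"], status)] += 1
    return hits


# Usage (the file path is a placeholder for your own log location):
# with open("access.log", encoding="utf-8", errors="replace") as log:
#     for (path, status), count in googlebot_errors(log).most_common(20):
#         print(status, count, path)
```

The URLs that show up most often with a 4xx or 5xx status are the ones to run through the URL Inspection tool first.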

To fix a crawl anomaly, ask Google to crawl the page again by submitting it through Fetch as Google (in the current Search Console, the live test in the URL Inspection tool) and check whether the URL returns a 200 OK response.

In most cases, a URL submitted through Fetch as Google or Request Indexing is crawled successfully and gets indexed. While fetching, also check the response headers of the page and confirm that they are what Google expects.
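For a quick check outside Search Console, the status code and headers can also be inspected with a short script. The sketch below uses only the Python standard library. The helper names `fetch_status` and `summarize` are invented for this example, and the user-agent string merely imitates Googlebot's published one, so a server may still answer the real Googlebot differently.

```python
import urllib.error
import urllib.request

# Assumption: this string only imitates Googlebot's published user agent;
# the request does not come from Google's crawler.
USER_AGENT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"


def fetch_status(url, timeout=10):
    """Return (status_code, headers) for url; urllib follows redirects itself."""
    request = urllib.request.Request(url, headers={"User-Agent": USER_AGENT})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status, dict(response.headers.items())
    except urllib.error.HTTPError as err:
        # urllib raises on 4xx/5xx, but the code and headers are still available.
        return err.code, dict(err.headers.items())


def summarize(status, headers):
    """Pick out the response details that matter for crawling and indexing."""
    lowered = {name.lower(): value for name, value in headers.items()}
    robots = lowered.get("x-robots-tag", "")
    return {
        "status": status,
        "ok": status == 200,
        "content_type": lowered.get("content-type"),
        "noindex": "noindex" in robots.lower(),
    }


# Usage (replace the URL with the page flagged in Search Console):
# status, headers = fetch_status("https://example.com/")
# print(summarize(status, headers))
```

Anything other than `"ok": True`, or an unexpected `noindex`, is worth fixing before requesting indexing again.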

Solution for Crawl Anomaly 4xx-Level Errors

If the error is a 404, check whether the URL goes through redirects before it resolves. A long redirect chain can take too long to reach a 200 OK response, and sometimes the redirects form a loop that never resolves.
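Redirect chains and loops are easy to trace by following one hop at a time. The sketch below is an illustration under stated assumptions: `trace_redirects` is a hypothetical helper, and `fetch` is any function you supply that returns a `(status, location)` pair for a URL without following redirects itself. The limit of 10 hops is an arbitrary choice for the example, not a documented Googlebot limit.

```python
REDIRECT_CODES = {301, 302, 303, 307, 308}


def trace_redirects(start_url, fetch, max_hops=10):
    """Follow redirects one hop at a time.

    fetch(url) must return (status, location), where location is the
    redirect target or None. Returns (chain, verdict): chain is a list of
    (url, status) pairs and verdict is a short description of the outcome.
    """
    chain = []
    seen = set()
    url = start_url
    while True:
        if url in seen:
            return chain, "loop"  # we have been here before
        if len(chain) >= max_hops:
            return chain, "too many hops"
        seen.add(url)
        status, location = fetch(url)
        chain.append((url, status))
        if status in REDIRECT_CODES and location:
            url = location
            continue
        verdict = "ok" if status == 200 else f"ended with {status}"
        return chain, verdict


# Usage with a hand-written table standing in for real HTTP responses:
table = {
    "/old": (301, "/new"),
    "/new": (200, None),
}
chain, verdict = trace_redirects("/old", lambda u: table.get(u, (404, None)))
print(chain, verdict)
```

A verdict of "loop" or "too many hops", or a chain with more than one or two entries, points to redirects that should be collapsed into a single 301 to the final URL.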

Solution for Crawl Anomaly 5xx-Level Errors

If the error is a 500 server error, the problem is at the server level. Make the website faster by reducing the number of requests each page makes to the server, so that the server is not slowed down or overloaded into returning a 500 error and Googlebot can crawl the page quickly.

Reasons for Crawl Anomaly Errors in Search Console

A main reason a fetch fails and raises a crawl anomaly in Google Search Console is that the page takes too long to load, i.e. the time spent downloading the page is too high. Google does not want to keep slow pages, and with mobile-first indexing it is pushing for a faster web: the mobile version of the page should load quickly so that users get what they are looking for from Google search.

Many website owners see crawl anomaly errors after their website is moved to mobile-first indexing. Google says mobile-first indexing is about responsive design and mobile friendliness, but at the same time Google also expects fast pages in the mobile version of its index.

Related Crawl Errors Coverage:

1) Amp Crawl Issue Failed Crawl Anomaly Error in Search Console Fix

2) Types of Crawl Errors and How to Fix Crawl Errors in Google Search Console

3) How to Fix Crawl Errors in Google Webmaster Tools

4) Fix Submitted URL Has Crawl Issue Errors in Search Console

5) Fix Server Error 5xx Search Console

Errors That Cause a Crawl Anomaly

The URL error anomaly alerts can include:

1) Server error

2) Soft 404

3) Access denied

4) Not found

5) Not followed

6) Page is 404

7) Not Mobile friendly

8) Anything that makes your webpage or URL load slowly

Two common fixes:

1) If it is a 404, redirect the URL to a related URL with a 301 redirect.

2) If it is a 500 internal server error, fix the server so that the URL returns a 200 OK response code.

Error Levels That Fall Under Crawl Anomaly

Only two levels of error get your URLs flagged as a crawl anomaly and de-indexed from Google search.

4xx level Errors:

Mostly these are 404 (not found) errors or access errors that stop Google from crawling the URL, such as 401 (unauthorized) and 403 (forbidden).

5xx level Errors:

These are server-side errors that stop Google from crawling, for example when the server crashes or is overloaded with requests: 500 (internal server error), 501 (not implemented), and so on.
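The two error levels can be told apart from the first digit of the status code. The sketch below groups a code into its level and adds the standard reason phrase from Python's `http.HTTPStatus`. The function names `crawl_level` and `describe` are made up for this example.

```python
from http import HTTPStatus


def crawl_level(status):
    """Group an HTTP status code into the level it belongs to."""
    if 200 <= status < 300:
        return "success"
    if 300 <= status < 400:
        return "redirect"
    if 400 <= status < 500:
        return "4xx client error"
    if 500 <= status < 600:
        return "5xx server error"
    return "unknown"


def describe(status):
    """Return a one-line description such as '403 Forbidden: 4xx client error'."""
    try:
        phrase = HTTPStatus(status).phrase
    except ValueError:
        phrase = "Unknown"  # not a registered status code
    return f"{status} {phrase}: {crawl_level(status)}"


for code in (200, 401, 403, 404, 500, 501):
    print(describe(code))
```

Only the 4xx and 5xx groups are candidates for a crawl anomaly. A 3xx response is a redirect and should end in a 200 once it is followed.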

Are URLs De-indexed After a Crawl Anomaly Error?

URLs with a crawl anomaly error will not be part of the Google index. They are dropped from Google search because a crawl anomaly means Google is unable to fetch the URL, which is then flagged as fetch failed: crawl anomaly.

This applies to both 4xx- and 5xx-level errors. Once Googlebot has trouble crawling your pages, the error graph in Search Console starts to climb. After fetches fail, Googlebot slows down its crawling of your website because it assumes the server is overloaded and does not want to crash it, and more URLs end up flagged in this error category.

If the page returns any other unexpected headers, look into them and fix them so that Googlebot can crawl the page and the crawl anomaly error goes away.

When Googlebot tries to crawl a page and encounters a 4xx- or 5xx-level error, the URL is flagged as a crawl anomaly in Search Console.

Run the URL Inspection tool in Search Console, debug the URL with the live test, check that your website returns a 200 OK response code, see how Googlebot sees the page, and fix any server errors that are causing the crawl anomaly.

Without crawling there is no indexing, so a crawl anomaly error can lead to a small dip in traffic.